Beginner's Guide to Kubernetes Networking

In this article, we will take a look at the fundamentals of Kubernetes Networking, breaking down its complexities into easily digestible concepts. We will explore the four main aspects of Kubernetes Networking:

container-to-container communication

pod-to-pod communication

pod-to-service communication

external-to-service communication

Exploring Container-to-Container Communication

In Kubernetes, containers are grouped together within pods, the smallest and most basic units in the Kubernetes object model. Each pod gets assigned a unique IP address, and all containers within the pod share the same network namespace.

Containers in the same pod can communicate over localhost using the target container's port number. For example, if a web server container in a pod listens on port 80, another container in the same pod can reach it at "localhost:80". This makes communication between co-located containers efficient and straightforward. The trade-off is that the containers also share one port space, so two containers in the same pod cannot listen on the same port.
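To make the shared-namespace idea concrete, the Python sketch below runs a "web server container" and a "sidecar container" as two threads in one process, which share a network stack much as containers in a pod do. It is only an analogy: the handler and function names are hypothetical, and the server binds to an OS-chosen free port instead of port 80 so the example does not need root privileges.

```python
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer
from urllib.request import ProxyHandler, build_opener


class WebHandler(BaseHTTPRequestHandler):
    """Stands in for the web server container."""

    def do_GET(self):
        body = b"hello from the web container"
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the demo output quiet


def start_web_container():
    # Listen on the loopback interface only; port 0 asks the OS for a free port.
    server = HTTPServer(("127.0.0.1", 0), WebHandler)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    return server


def sidecar_fetch(port):
    # The "sidecar" reaches the web server through localhost, as it could in a pod.
    opener = build_opener(ProxyHandler({}))  # bypass any HTTP proxy settings
    with opener.open(f"http://localhost:{port}/", timeout=5) as resp:
        return resp.read().decode()


if __name__ == "__main__":
    web = start_web_container()
    print(sidecar_fetch(web.server_address[1]))
    web.shutdown()
```

Because both sides live in the same network namespace, no pod IP or service lookup is involved; the sidecar only needs to know the port.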

Pod-to-Pod Communication

While container-to-container communication within a pod is straightforward, most applications consist of many pods spread across several nodes, so pod-to-pod communication is just as important for building scalable and resilient applications.

Kubernetes uses a flat network model: every pod is assigned an IP address that is unique within the cluster, and any pod can reach any other pod directly at that address, even on another node, without Network Address Translation (NAT).

Kubernetes does not implement this pod network itself. Instead, it relies on a network plugin that implements the Container Network Interface (CNI) specification to enable pod-to-pod communication across nodes. The plugin is responsible for allocating IP addresses to pods, setting up routes, and managing network resources. Several third-party CNI plugins, such as Calico, Flannel, and Weave Net, offer different features and performance characteristics.

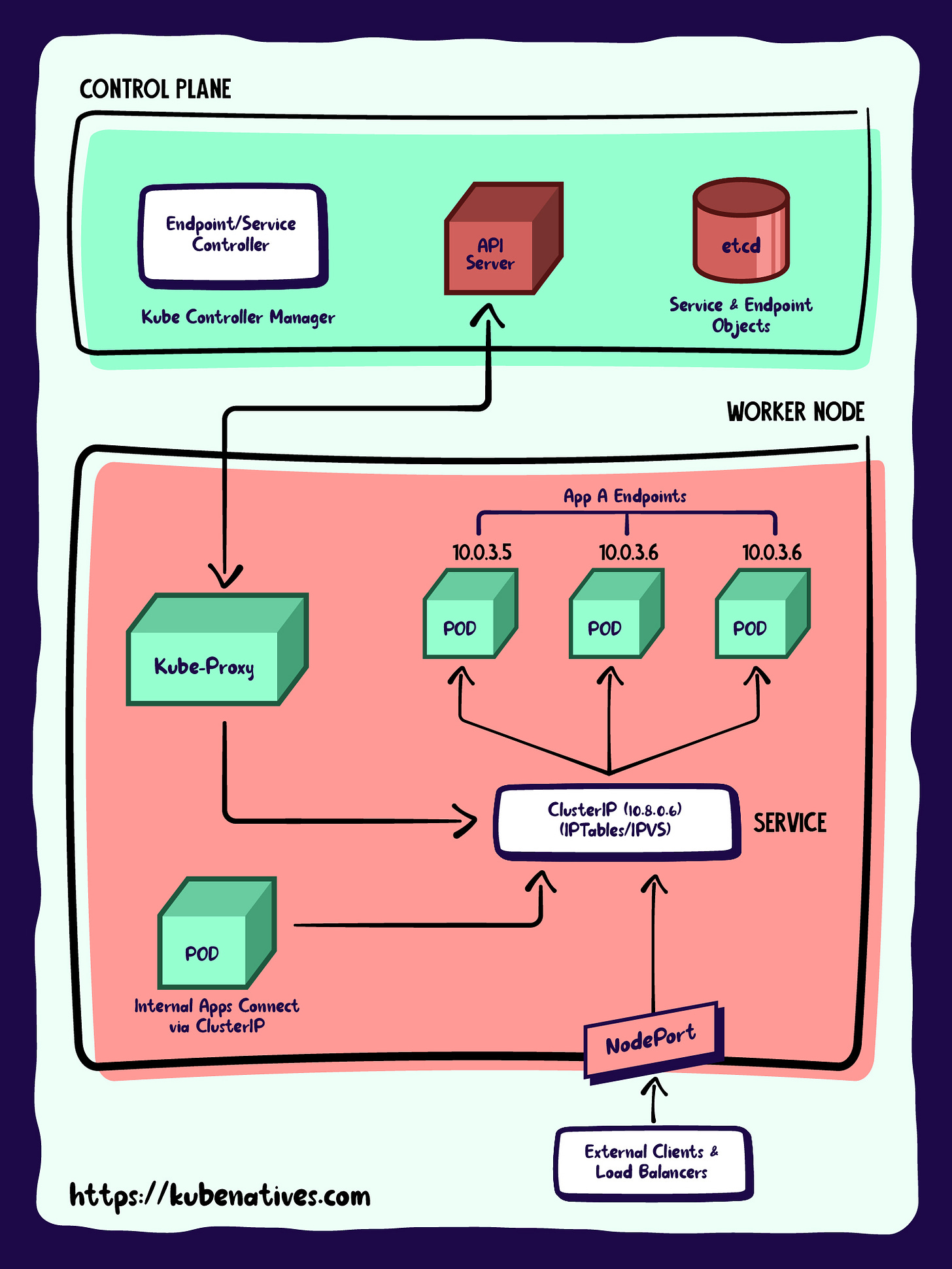
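As a rough illustration of the address-allocation and routing duties of a network plugin, the Python sketch below models a hypothetical address manager. It assumes a cluster-wide pod range of 10.244.0.0/16 carved into one /24 subnet per node (a common layout, for example Flannel's default, but configurable in practice). `ToyIPAM`, `add_node`, `add_pod`, and `route_to` are invented names; a real plugin also creates network interfaces and programs routes, which this model does not do.

```python
import ipaddress


class ToyIPAM:
    """Hypothetical, simplified model of a network plugin's address management."""

    def __init__(self, cluster_cidr="10.244.0.0/16", node_prefix=24):
        # Carve the cluster-wide pod range into one subnet per node.
        self._unused_subnets = ipaddress.ip_network(cluster_cidr).subnets(new_prefix=node_prefix)
        self.node_cidrs = {}   # node name -> that node's pod subnet
        self._free_hosts = {}  # node name -> iterator over unassigned addresses
        self.pod_ips = {}      # pod name -> assigned address

    def add_node(self, node):
        subnet = next(self._unused_subnets)
        hosts = subnet.hosts()
        next(hosts)  # reserve the first address (e.g. for a node-local bridge/gateway)
        self.node_cidrs[node] = subnet
        self._free_hosts[node] = hosts
        return subnet

    def add_pod(self, node, pod):
        # Each address is handed out once, so pod IPs are unique cluster-wide.
        ip = next(self._free_hosts[node])
        self.pod_ips[pod] = ip
        return ip

    def route_to(self, ip):
        """Return the node whose pod subnet contains `ip` (a stand-in for a route lookup)."""
        addr = ipaddress.ip_address(ip)
        for node, subnet in self.node_cidrs.items():
            if addr in subnet:
                return node
        raise LookupError(f"no route to {ip}")


if __name__ == "__main__":
    ipam = ToyIPAM()
    ipam.add_node("node-a")
    ipam.add_node("node-b")
    print(ipam.add_pod("node-a", "web-1"))  # 10.244.0.2
    print(ipam.add_pod("node-b", "web-2"))  # 10.244.1.2
    print(ipam.route_to("10.244.1.2"))      # node-b
```

Because every node owns a distinct subnet, a packet from `web-1` to `10.244.1.2` can be delivered to `node-b` based on its destination address alone, which is what lets pods talk to each other without NAT.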

Understanding Pod-to-Service Communication

While direct pod-to-pod communication is possible, relying on pod IP addresses is not recommended: pods are ephemeral, and a pod that is rescheduled or replaced usually comes back with a different IP address. Instead, Kubernetes provides a higher-level abstraction called Services.

A Kubernetes Service provides a stable way to expose an application running on a group of pods, typically selected by their labels. A Service normally has a consistent virtual IP address (its ClusterIP) and DNS name, and traffic sent to it is load-balanced across the pods that provide the same functionality.

Services let internal or external clients discover and access the application without knowing the underlying pods' IP addresses or network configuration.

Services come in several types, each suited to a specific use case: ClusterIP (the default) makes the Service reachable only from inside the cluster, while NodePort and LoadBalancer also expose it to external clients. Whatever the type, other pods or external clients keep using the same Service address even when the underlying pod instances are rescheduled or replaced.
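The relationship between a stable Service address and a changing set of pods can be modelled in a few lines of Python. This is a hypothetical toy, not how kube-proxy or the cluster DNS are implemented: `ToyService` and its methods are invented, the addresses are made up, the cluster domain is assumed to be the default `cluster.local`, and backends are chosen round-robin purely for simplicity.

```python
from dataclasses import dataclass, field


@dataclass
class ToyService:
    """Hypothetical model: a stable address and DNS name in front of changing pods."""

    name: str
    namespace: str
    cluster_ip: str                                # fixed for the Service's lifetime
    endpoints: list = field(default_factory=list)  # current backend pod IPs
    _next: int = field(default=0, repr=False)      # round-robin cursor

    @property
    def dns_name(self):
        # Assumes the default cluster domain "cluster.local".
        return f"{self.name}.{self.namespace}.svc.cluster.local"

    def replace_pod(self, old_ip, new_ip):
        # A rescheduled pod comes back with a new IP; only the endpoint list changes.
        self.endpoints = [new_ip if ip == old_ip else ip for ip in self.endpoints]

    def pick_backend(self):
        # Round-robin for simplicity; kube-proxy's iptables mode picks randomly instead.
        backend = self.endpoints[self._next % len(self.endpoints)]
        self._next += 1
        return backend


if __name__ == "__main__":
    svc = ToyService("web", "default", "10.96.0.10", ["10.244.0.2", "10.244.1.2"])
    print(svc.dns_name, svc.cluster_ip)
    print(svc.pick_backend(), svc.pick_backend())
    svc.replace_pod("10.244.1.2", "10.244.1.7")
    print(svc.cluster_ip, svc.endpoints)
```

Clients only ever see `dns_name` and `cluster_ip`; replacing a pod changes `endpoints` but leaves both untouched.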

External-to-Service Communication

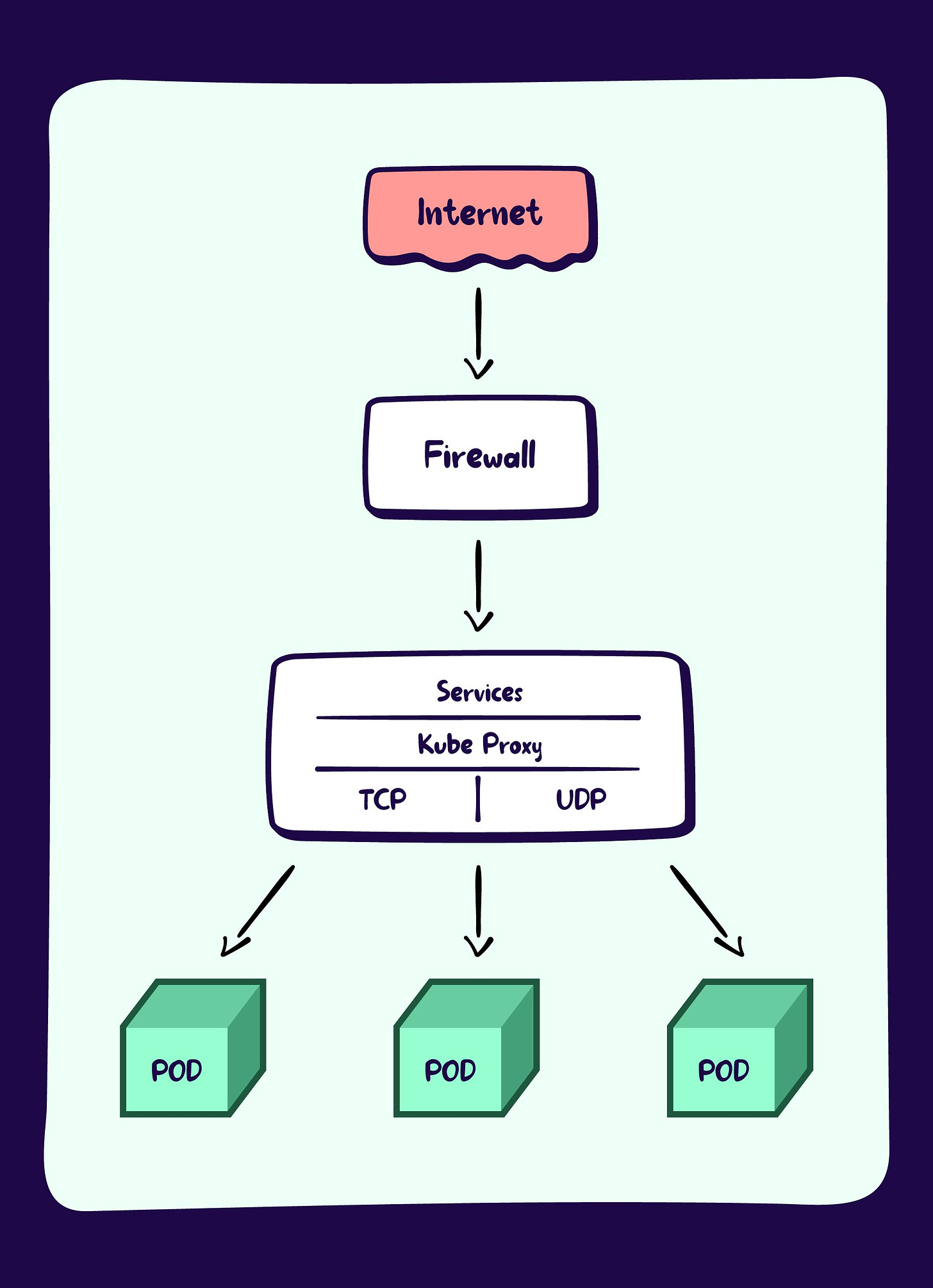

You can use several methods to enable communication between external clients and services running in a Kubernetes cluster. Each method has its advantages and trade-offs, depending on the specific use case and requirements. Here, we'll discuss three common approaches: NodePort, LoadBalancer, and Ingress.

NodePort Services

A NodePort service opens the same port on every node in the cluster, so external clients can reach the service through any node's IP address on that port. kube-proxy then forwards the traffic to one of the service's backend pods, which may be running on a different node. How the backend is chosen depends on kube-proxy's mode: random selection in the default iptables mode, round-robin by default in IPVS mode.

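While NodePort services are relatively simple to set up, they have limitations. Node ports must come from a restricted range (30000-32767 by default), and each node port can be claimed by only one service. Clients must also be pointed at node IP addresses themselves: if they all use a single node, that node becomes a bottleneck and a single point of failure, and traffic often takes an extra hop to reach a pod on another node.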
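One way to see these constraints is to build a NodePort Service manifest programmatically. The hypothetical helper below produces a minimal `v1` Service as a Python dict (`kubectl` accepts JSON as well as YAML) and rejects node ports outside the default range. The Service name, the `app` label, and the port numbers are illustrative assumptions, and a cluster configured with a different `--service-node-port-range` would need a different check.

```python
import json

# Default value of the API server's --service-node-port-range; clusters may change it.
DEFAULT_NODE_PORT_RANGE = range(30000, 32768)


def is_valid_node_port(port):
    return port in DEFAULT_NODE_PORT_RANGE


def node_port_service(name, app_label, port, target_port, node_port):
    """Build a minimal NodePort Service manifest (hypothetical helper)."""
    if not is_valid_node_port(node_port):
        raise ValueError(f"nodePort {node_port} is outside 30000-32767")
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "NodePort",
            "selector": {"app": app_label},  # pods that back the Service
            "ports": [{
                "protocol": "TCP",
                "port": port,                # port on the Service's ClusterIP
                "targetPort": target_port,   # port the containers listen on
                "nodePort": node_port,       # port opened on every node
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(node_port_service("web", "web", 80, 8080, 30080), indent=2))
```

The output can be piped into `kubectl apply -f -`. If the `nodePort` field is omitted, Kubernetes allocates a free port from the configured range instead.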

LoadBalancer Services

LoadBalancer services are another way to expose services to external clients. Creating one makes Kubernetes ask the cloud provider or underlying infrastructure to provision an external load balancer. The load balancer receives traffic on its own IP address and port and distributes it to the service's backend pods, in many setups by forwarding it to the nodes' NodePorts.

LoadBalancer services offer built-in load balancing, support for non-HTTP protocols (they work at the transport layer), and the ability to use well-known ports such as 80 and 443. However, each LoadBalancer service usually gets its own load balancer, which can add noticeable cost, and the type only works where the cloud provider or infrastructure can provision load balancers.
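For comparison with NodePort, the hypothetical helper below builds a minimal Service manifest of type `LoadBalancer` as a Python dict. The only real difference is the `type` field; provisioning the external load balancer is left to the cluster's cloud integration. The name, label, and ports are illustrative assumptions.

```python
import json


def load_balancer_service(name, app_label, port, target_port):
    """Build a minimal LoadBalancer Service manifest (hypothetical helper)."""
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            "type": "LoadBalancer",          # ask the infrastructure for an external load balancer
            "selector": {"app": app_label},  # pods that receive the traffic
            "ports": [{"protocol": "TCP", "port": port, "targetPort": target_port}],
        },
    }


if __name__ == "__main__":
    # Serve on the well-known HTTP port 80 and forward to container port 8080.
    print(json.dumps(load_balancer_service("web", "web", 80, 8080), indent=2))
```

After applying it, `kubectl get service web` shows the EXTERNAL-IP as `<pending>` until the provider has provisioned the load balancer; on infrastructure without such an integration it stays pending.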

Ingress

Ingress is a more advanced and flexible solution for exposing services to external clients, particularly for HTTP and HTTPS traffic. An Ingress object defines rules for routing external traffic to the appropriate services within the cluster. Kubernetes does not process these rules itself: you must deploy an Ingress controller, such as one based on NGINX or HAProxy, which acts as a reverse proxy and load balancer for incoming traffic.

Ingress offers several advantages over NodePort and LoadBalancer services: many services can share a single external entry point, and it supports custom domain names, SSL/TLS termination, and fine-grained routing based on paths, hostnames, or other criteria. However, it adds complexity, since the Ingress controller must be deployed, configured, and maintained, and is itself usually exposed through a LoadBalancer or NodePort service.
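To show how host- and path-based rules pick a backend, here is a simplified Python model of Ingress matching for the `Prefix` path type: paths are compared element by element (so `/api` matches `/api/v1` but not `/apis`), and the longest matching path wins. `Rule` and `route` are hypothetical names; real controllers translate rules into their own proxy configuration and add features such as a default backend and the `Exact` path type, which this sketch omits.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Rule:
    host: str     # e.g. "shop.example.com"
    path: str     # a Prefix-type path such as "/api"
    service: str  # name of the backend Service


def _segments(path):
    # Split on "/" and drop empty elements, so "/api/" and "/api" compare equal.
    return [s for s in path.split("/") if s]


def prefix_matches(rule_path, request_path):
    # Element-wise prefix match: "/api" matches "/api/v1" but not "/apis".
    rule = _segments(rule_path)
    return _segments(request_path)[: len(rule)] == rule


def route(rules: List[Rule], host: str, path: str) -> Optional[str]:
    candidates = [r for r in rules if r.host == host and prefix_matches(r.path, path)]
    if not candidates:
        return None  # a real controller would use a default backend or return 404
    # The longest matching path takes priority.
    return max(candidates, key=lambda r: len(_segments(r.path))).service


if __name__ == "__main__":
    rules = [
        Rule("shop.example.com", "/", "frontend"),
        Rule("shop.example.com", "/api", "api"),
        Rule("blog.example.com", "/", "blog"),
    ]
    print(route(rules, "shop.example.com", "/api/v1/items"))  # api
    print(route(rules, "shop.example.com", "/apis"))          # frontend
    print(route(rules, "blog.example.com", "/posts/1"))       # blog
```

Two hostnames and three services share one entry point here, which is the main thing a single Ingress buys over one LoadBalancer per service.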

Kube Proxy

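kube-proxy is the node-level component that implements Services. It runs on every node, watches the Kubernetes API for Services and their endpoints, and maintains rules on the node (such as iptables or IPVS rules, depending on its mode) so that traffic sent to a Service's ClusterIP or NodePort is forwarded to one of the Service's backend pods.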
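In iptables mode, kube-proxy spreads connections across endpoints by chaining one rule per endpoint, each matching with a chosen probability (via the iptables `statistic` match). The Python sketch below models that idea rather than reproducing kube-proxy's code: with n endpoints, rule i matches with probability 1/(n - i) if the earlier rules did not, which works out to an overall 1/n per endpoint. The function names are hypothetical.

```python
import random
from fractions import Fraction


def rule_probabilities(n):
    """Match probability of each rule in the chain; the last one always matches."""
    return [Fraction(1, n - i) for i in range(n)]


def effective_probabilities(n):
    """Overall chance that each endpoint is chosen when rules are tried in order."""
    remaining = Fraction(1)  # probability that no earlier rule has matched yet
    result = []
    for p in rule_probabilities(n):
        result.append(remaining * p)
        remaining *= 1 - p
    return result


def pick_endpoint(endpoints, rng):
    """Walk the rule chain once, the way a new connection would traverse it."""
    for endpoint, p in zip(endpoints, rule_probabilities(len(endpoints))):
        if rng.random() < p:
            return endpoint
    return endpoints[-1]  # not reached: the last rule has probability 1


if __name__ == "__main__":
    print(rule_probabilities(3))
    print(effective_probabilities(3))
    print(pick_endpoint(["10.244.0.2", "10.244.1.2", "10.244.2.2"], random.Random(0)))
```

Exact fractions make the uniformity easy to check: `effective_probabilities(3)` returns three `Fraction(1, 3)` values, even though the individual rules match with 1/3, 1/2, and 1.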

Network Plugins

Network plugins are what provide the pod-to-pod connectivity described earlier. They implement the Container Network Interface (CNI) specification, which defines a standard interface between container runtimes and network providers: when a pod is created or deleted, the container runtime on the node invokes the configured plugin to set up or tear down the pod's networking. Some popular network plugins for Kubernetes include Calico, Flannel, and Weave Net.
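The CNI calling convention itself is simple: the runtime executes the plugin binary, passes parameters such as `CNI_COMMAND`, `CNI_IFNAME`, and `CNI_NETNS` as environment variables and the network configuration as JSON on stdin, and reads a JSON result from stdout. The toy Python plugin below sketches that flow under heavy assumptions: it configures nothing, returns a hard-coded placeholder address instead of running real address management, and fills in only a subset of the result fields. `handle` and `main` are hypothetical names.

```python
import json
import os
import sys


def handle(env, config):
    """Toy CNI plugin logic: returns the JSON a runtime would read from stdout.

    It does NOT create interfaces or touch network namespaces; the address
    below is a placeholder for what a real IPAM step would allocate.
    """
    command = env.get("CNI_COMMAND")
    version = config.get("cniVersion", "1.0.0")
    if command == "VERSION":
        return {"cniVersion": version, "supportedVersions": ["0.4.0", "1.0.0"]}
    if command == "ADD":
        return {
            "cniVersion": version,
            # Interface name chosen by the runtime (e.g. "eth0") inside the pod's netns.
            "interfaces": [{"name": env["CNI_IFNAME"], "sandbox": env["CNI_NETNS"]}],
            "ips": [{"address": "10.244.0.2/24", "gateway": "10.244.0.1", "interface": 0}],
        }
    if command in ("DEL", "CHECK"):
        return {}  # success produces no output; a real plugin would tear down or verify
    # Error code 4: invalid or missing environment variables such as CNI_COMMAND.
    return {"cniVersion": version, "code": 4, "msg": f"unsupported CNI_COMMAND {command!r}"}


def main():
    config = json.load(sys.stdin)  # network configuration supplied by the runtime
    result = handle(dict(os.environ), config)
    if result:
        json.dump(result, sys.stdout)
    return 1 if "code" in result else 0


if __name__ == "__main__" and "CNI_COMMAND" in os.environ:
    sys.exit(main())
```

A quick manual check might look like `echo '{"cniVersion": "1.0.0"}' | CNI_COMMAND=VERSION python toy_cni.py`, where the file name is arbitrary.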